How Predictable is the Eurovision Song Contest?

The Eurovision Song Contest has long been a popular subject for betting, and fan websites such as Eurovisionworld collate the odds from about a dozen online bookmakers to predict who will win the contest. The success of these predictions is mixed. By the evening of the final, Eurovisionworld’s compilation of bookmakers had identified the winner correctly in only two of the last five contests (2015 and 2019). Under a more flexible criterion such as the Top 5, however, the same site has on average identified 4 of the 5 top-placed songs correctly.

But is it actually possible to predict a Eurovision winner? The scoring system, for those less familiar with the contest, is rather elaborate. Every participating country has a professional jury, which ranks the songs from every other country. The jury awards its top pick 12 points (douze points), its second choice 10 points, its third choice 8 points, and then 7, 6, 5, 4, 3, 2, and 1 point, respectively, to the rest of its top 10. The remaining songs receive no points from that jury. Each song’s jury points are then added up across all the juries to determine the jury winner.

The general public in participating countries can also vote by telephone for their favourite songs, again from every country except their own. These votes are totalled per country, and using the same system as the juries, each country awards its favourite song 12 points, its second favourite 10 points, then 8, 7, 6, 5, 4, 3, 2, and 1 point for the rest of its top 10. Each song’s televoting points are added up across all countries to determine the televote winner.

Finally, the jury points and the televoting points are added together to determine the total score for every song, and the song with the most points is declared the winner. The juries and televoters rarely agree completely, either within or between countries, so despite a theoretical perfect score of 900–1000 points under the current structure of the contest, the highest score so far has been 758 (Portugal’s ‘Amar pelos dois’ in 2017).
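The tally described above can be sketched in a few lines of Python. This is a minimal illustration, not the contest’s official scoring code: the names `POINTS`, `tally` and `perfect_score` are hypothetical, the songs are placeholders, and each ranking is assumed to be one country’s ordered top 10 with its own song already excluded.

```python
POINTS = [12, 10, 8, 7, 6, 5, 4, 3, 2, 1]  # points for 1st place .. 10th place


def tally(jury_rankings, televote_rankings):
    """Total score per song. Each ranking is one country's ordered top 10;
    jury and televote rankings are scored identically and simply added."""
    totals = {}
    for ranking in list(jury_rankings) + list(televote_rankings):
        # zip stops after 10 entries, so songs ranked lower receive nothing
        for pts, song in zip(POINTS, ranking):
            totals[song] = totals.get(song, 0) + pts
    return totals


def perfect_score(n_voting_countries):
    """Ceiling for one song: 12 points from every other country's jury
    and 12 points from every other country's televote."""
    return 2 * 12 * (n_voting_countries - 1)


# Hypothetical example: two voting countries, each with a jury and a televote
juries = [["A", "B", "C"], ["A", "C", "B"]]
televotes = [["B", "A", "C"], ["A", "B", "C"]]
print(tally(juries, televotes))  # → {'A': 46, 'B': 40, 'C': 34}
print(perfect_score(41))         # → 960
```

With 41 voting countries, for instance, the ceiling works out at 2 × 12 × 40 = 960 points.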

Because we know how many points each jury awarded each other country, and likewise for the televoters, we can estimate after the fact the underlying quality of each song. We use a classical statistical technique called the exploded logit model. The key idea behind this model is that the higher a song’s quality relative to the other songs in the contest, the higher the probability that a country’s jury or televoters will rank it first; and even if they don’t rank it first, the higher the probability that they will rank it second, and so on. Because recent contests have included qualifying semi-finals, we can use the jury and televote rankings from the semi-finals as well as the final to estimate underlying song quality as precisely as possible. In theory, bookmakers (or any other prediction system) cannot do better on average than the predictions of this exploded logit model: it is based on after-the-fact knowledge of how much the juries and televoters agreed with each other, and in a close contest there is always a chance that juries and televoters might have switched their preferences between two songs of similar quality.
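To make the exploded logit idea concrete, here is a minimal Python sketch of the probability it assigns to one country’s ranking. It assumes each song has a single latent quality on a log scale, stored in a hypothetical `theta` dictionary; the chance of picking a song for the next place is proportional to exp(quality) among the songs not yet ranked. This illustrates the technique only and is not the code behind the estimates in this article.

```python
import math


def exploded_logit_loglik(theta, ranking, eligible):
    """Log-probability of one voter's ranking under the exploded logit model.

    theta:    dict mapping each song to its latent quality (log scale)
    ranking:  the songs the voter placed 1st, 2nd, 3rd, ... (e.g. its top 10)
    eligible: every song the voter could rank (all songs except its own)
    """
    remaining = set(eligible)
    loglik = 0.0
    for song in ranking:
        # log of exp(theta[song]) / sum of exp(theta) over the unranked songs,
        # computed with the log-sum-exp trick for numerical stability
        m = max(theta[s] for s in remaining)
        log_denom = m + math.log(sum(math.exp(theta[s] - m) for s in remaining))
        loglik += theta[song] - log_denom
        # the chosen song "explodes" out of the pool before the next place
        remaining.remove(song)
    return loglik


theta = {"A": 1.0, "B": 0.0, "C": 0.0}  # hypothetical qualities
# probability that a voter ranks A first and B second among A, B and C
print(math.exp(exploded_logit_loglik(theta, ["A", "B"], ["A", "B", "C"])))  # ≈ 0.288
```

Fitting the model then amounts to choosing the `theta` values that maximise the sum of this log-likelihood over every jury and televote ranking; for a sketch, any general-purpose numerical optimiser would do. Only differences between qualities matter, so one song’s quality can be fixed at zero.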

One way to look at the results of the model is to ask the simple question it is designed for: in a given year, what is the chance that any given country’s jury or televoters would have awarded a particular song douze points? We have produced a large graphic summarising these probabilities. Even for strong favourites like Ireland’s ‘Rock ’n’ Roll Kids’ in 1994 or Great Britain’s ‘Love Shine a Light’ in 1997, the chance of any single jury awarding 12 points was just under one in three; in an average year, it is only one in five. In this sense, it is quite unwise to wager on the results of the Song Contest.

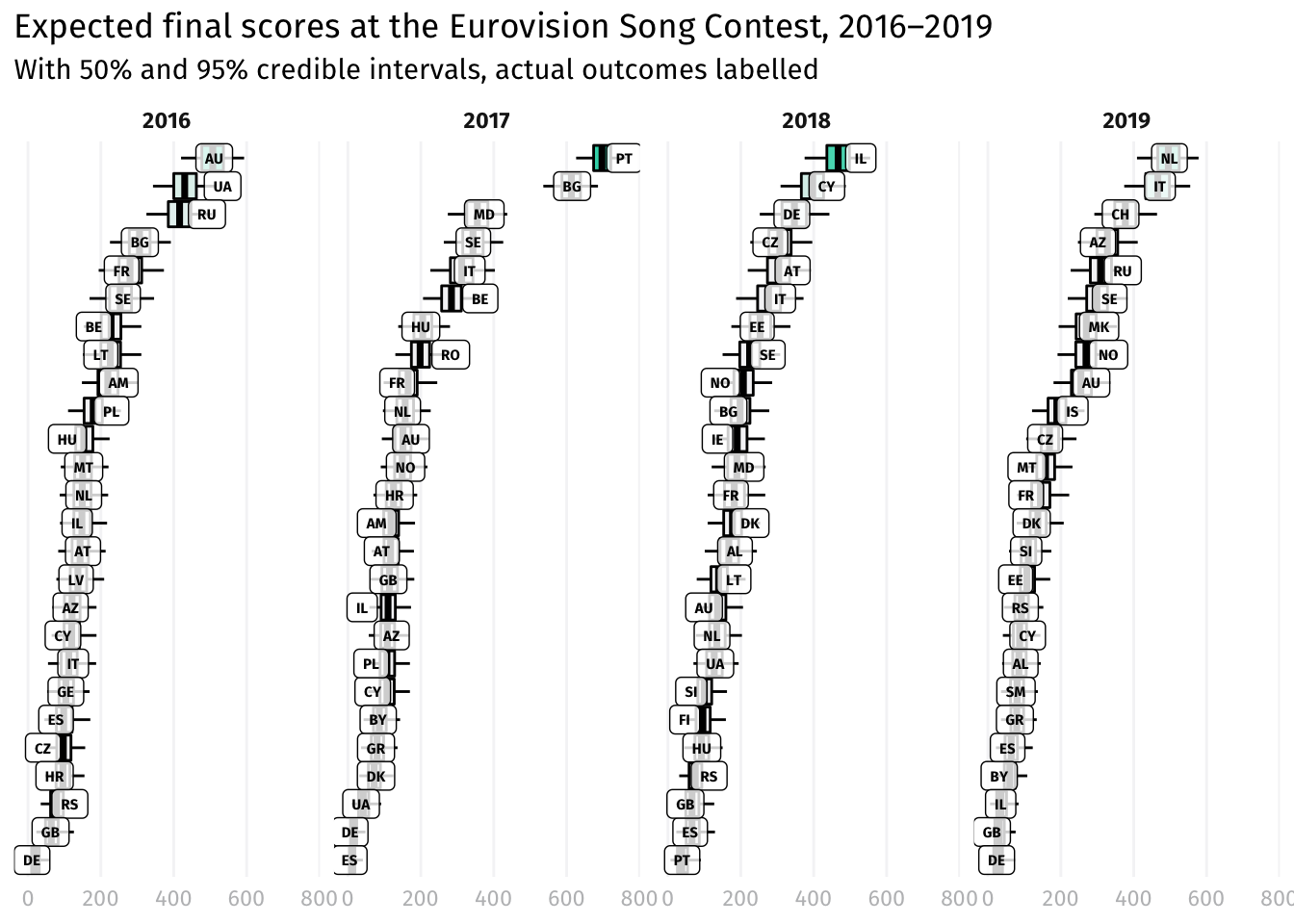
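Under the exploded logit model, the chance of douze points is just the first step of a ranking: a song’s exp(quality) divided by the sum of exp(quality) over every song that country is allowed to vote for. A minimal sketch, again assuming a hypothetical `theta` dictionary of latent qualities keyed by country (one song per country):

```python
import math


def douze_points_probability(theta, song, voter_country):
    """Chance that one jury (or televote) from `voter_country` ranks `song`
    first, given hypothetical latent qualities `theta` keyed by country."""
    if song == voter_country:
        return 0.0  # countries cannot vote for their own song
    eligible = [s for s in theta if s != voter_country]
    # softmax over the eligible songs, shifted by the max for stability
    m = max(theta[s] for s in eligible)
    denom = sum(math.exp(theta[s] - m) for s in eligible)
    return math.exp(theta[song] - m) / denom


theta = {"A": math.log(2), "B": 0.0, "C": 0.0}  # A is "twice as strong" as B or C
print(douze_points_probability(theta, "A", "C"))  # ≈ 0.667
```

For comparison, if all 26 songs in a final were of equal quality, a country with its own song in that final would give its 12 points to any particular other song with probability 1/25.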

Aggregated over the 40-odd juries that have voted in recent years, however, and armed with the knowledge of which songs were eliminated in the semi-finals, it is possible to do better. The following graphic illustrates the results of 8000 simulations of the Song Contest finals from 2016–2019 (the years in which the current scoring system has been in place). The coloured boxes encompass half of the simulations, and the ‘whisker’ lines encompass 95 percent of them; in other words, we should expect a country’s score to fall inside its box half the time and inside its whiskers 19 times out of 20. The country labels are placed at their actual scores from the Song Contest finals, and indeed, about half of them landed inside the boxes and about 19 out of 20 within the whiskers.

If you had somehow been able to use these simulations to predict the winner, you would have been correct in four of the past five years. The exception was 2016, when Australia’s ‘Sound of Silence’ had an 83 percent chance of winning according to these simulations, but Ukraine, with only a 10 percent chance, pulled ahead. (The bookmakers favoured Russia’s ‘You Are the Only One’ that year, a song the simulations gave only a 7 percent chance of winning.) That was the closest contest in points under the current scoring system. The 2019 contest was nearly as close in points and statistically even closer: the Netherlands’ ‘Arcade’ won in only 67 percent of the simulations, whereas Italy’s ‘Soldi’ won in 33 percent.
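Simulations of this kind can be sketched as follows. This is a minimal illustration under simplifying assumptions, not the code used for the figures: each song gets one hypothetical latent quality shared by juries and televoters (the article’s model may treat them separately), every voting country casts one jury and one televote ranking drawn from the exploded logit model, box and whisker limits are nearest-rank empirical percentiles, and a tie for first simply splits that simulation’s win (the contest itself applies a tie-break rule).

```python
import math
import random

POINTS = [12, 10, 8, 7, 6, 5, 4, 3, 2, 1]


def sample_ranking(theta, eligible, rng):
    """One voter's top 10 under the exploded logit model: pick a favourite
    with probability proportional to exp(quality), remove it, repeat."""
    pool = list(eligible)
    ranking = []
    for _ in range(min(len(POINTS), len(pool))):
        weights = [math.exp(theta[s]) for s in pool]
        pick = rng.choices(pool, weights=weights, k=1)[0]
        ranking.append(pick)
        pool.remove(pick)
    return ranking


def simulate_final(theta, voters, rng):
    """One simulated final: each voting country casts a jury ranking and a
    televote ranking (never for its own song), scored 12, 10, 8, ..., 1."""
    scores = {s: 0 for s in theta}
    for country in voters:
        eligible = [s for s in theta if s != country]
        for _ in range(2):  # jury, then televote
            for pts, song in zip(POINTS, sample_ranking(theta, eligible, rng)):
                scores[song] += pts
    return scores


def percentile(sorted_xs, p):
    """Nearest-rank empirical percentile of an already sorted list."""
    return sorted_xs[round(p * (len(sorted_xs) - 1))]


def summarise(theta, voters, n_sims=8000, seed=1):
    """Box (middle 50%), whiskers (middle 95%) and win share for each song."""
    rng = random.Random(seed)
    sims = [simulate_final(theta, voters, rng) for _ in range(n_sims)]
    wins = {s: 0.0 for s in theta}
    for scores in sims:
        best = max(scores.values())
        leaders = [s for s, pts in scores.items() if pts == best]
        for s in leaders:
            wins[s] += 1 / len(leaders)  # split ties equally (simplification)
    summary = {}
    for song in theta:
        xs = sorted(sim[song] for sim in sims)
        summary[song] = {
            "box": (percentile(xs, 0.25), percentile(xs, 0.75)),
            "whiskers": (percentile(xs, 0.025), percentile(xs, 0.975)),
            "p_win": wins[song] / n_sims,
        }
    return summary
```

On this reading, Australia’s 83 percent in 2016 means topping roughly 6,640 of the 8000 simulated finals. Pure Python is slow at that scale with a full field and some 40 voting countries, so a smaller `n_sims` is handy for experimenting.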

If we relax the success criterion to the Top 5, these simulations would not have done significantly better than the bookmakers: an average of 4.2 correct versus the bookmakers’ 4.0, a statistical tie. And this year the bookmakers are predicting an unusually close race, with Italy the favourite at the time of writing, followed by France, Malta, Ukraine, and Switzerland. Are the juries’ preferences really as divided as the bookmakers suggest? Watch this page for an update after the official results are released.
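The Top 5 criterion is a simple overlap count: for each contest, how many of the five songs a forecast placed highest actually finished in the Top 5, averaged over the contests. A minimal sketch with hypothetical placeholder songs (no real forecasts or results); the function names are illustrative only:

```python
def top5_hits(predicted, actual):
    """Songs in a forecast's top 5 that also finished in the actual top 5.
    Both arguments are lists ordered from first place downwards."""
    return len(set(predicted[:5]) & set(actual[:5]))


def mean_top5_hits(years):
    """Average hits over a list of (predicted, actual) pairs, one per contest."""
    return sum(top5_hits(p, a) for p, a in years) / len(years)


years = [
    (["A", "B", "C", "D", "E"], ["B", "A", "C", "E", "F"]),  # 4 hits
    (["G", "H", "I", "J", "K"], ["G", "H", "I", "J", "K"]),  # 5 hits
]
print(mean_top5_hits(years))  # → 4.5
```

Assuming both averages cover the same five contests, 4.2 versus 4.0 amounts to 21 correct songs versus 20: a difference of a single song.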

And in the meantime, test your memory of the Song Contest in our Hooked on Music experiment.